Our Projects: Search platform with automatic annotation and classification of documents

Our team has developed a platform that combines intelligent search, automatic annotation, and document classification systems.

The Problem

Sooner or later, many organizations struggle to search their documents efficiently and to reach the information they need quickly. When hundreds of thousands of unstructured files and documents accumulate in a corporate repository, finding the right one becomes difficult and time-consuming.

Our analysts conducted a study and came to the following conclusions:

- Almost every company has more than 80% of its data in an unstructured format.

- There is no common interface for finding information; one must search multiple data warehouses one after the other.

- Search is inefficient because enterprise tools often do not understand natural language, are not typo-tolerant, and require multiple search attributes.

Document search should be fast, intuitive, and easy to use. Companies should utilize mechanisms that self-learn, find relationships between data, classify it, and build semantic fields for the best results.

To meet these needs, Sibedge came up with the idea of a platform that automatically analyzes a repository of corporate documentation, classifies the documents, and annotates them. Users interact with the system in their familiar conversational language, which lets them quickly find the data they need.

Development Process

To build the platform, we leveraged the machine learning expertise of Sibedge engineers with experience in building virtual assistants for large corporations and government agencies. This experience formed the basis for the development of the user interaction module.

Search Query Module

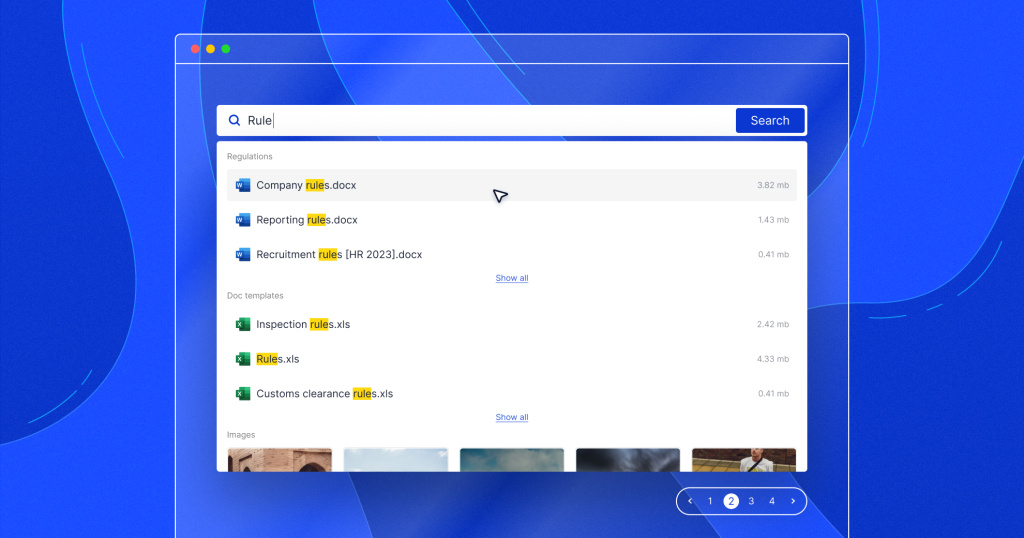

We used NLU (Natural Language Understanding) and NLP (Natural Language Processing) technologies to understand and process natural human language. The user can enter search queries in natural language, and the system provides suggestions based on the user's previous queries and the most popular queries within the organization. The module also displays relevant titles of publicly available documents.
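The suggestion logic described above can be illustrated with a minimal sketch. It assumes simple prefix matching and frequency ranking, with the user's own history placed ahead of organization-wide queries; the function name `suggest` and its parameters are hypothetical and not taken from the platform.

```python
from collections import Counter


def suggest(prefix: str, user_history: list[str], org_queries: list[str],
            limit: int = 5) -> list[str]:
    """Return suggestions that start with `prefix` (hypothetical helper).

    The user's own previous queries come first, most frequent first,
    followed by the most popular queries within the organization.
    """
    needle = prefix.strip().lower()
    if not needle:
        return []

    def ranked(queries: list[str]) -> list[str]:
        counts = Counter(q.strip().lower() for q in queries)
        matches = [q for q in counts if q.startswith(needle)]
        # Most frequent first; alphabetical order breaks ties.
        return sorted(matches, key=lambda q: (-counts[q], q))

    suggestions: list[str] = []
    for query in ranked(user_history) + ranked(org_queries):
        if query not in suggestions:  # skip duplicates across both sources
            suggestions.append(query)
    return suggestions[:limit]
```

A production module would likely rank by meaning rather than by prefix, but the ordering idea (personal history first, then popular queries) stays the same.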

Annotation Module

To speed up the search for the necessary documents, we developed an automatic text annotation module based on a neural network trained on the Latent Dirichlet Allocation (LDA) model. ML engineers loaded 1500 documents into the module, and it automatically segmented them into topics and assigned key (meaningful) words.

The experts then tested the neural network by comparing the resulting annotations with those formulated by the authors of the original documents. They ultimately achieved over 80% accuracy. The module can analyze text without human intervention, extract key words and phrases, and create annotations of up to 600 characters.

During the search, the user sees the documents whose annotations contain the keywords or phrases of interest. This significantly increases the speed and relevance of the search. The annotation module is built with Python 3.10, FastAPI, NLTK, Gensim, and spaCy.
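As a rough illustration of the two ideas above, the sketch below caps an annotation at 600 characters and selects documents whose annotations contain every word of the query. It is a simplified stand-in: it does not perform LDA topic modeling, the "all query words must appear" rule is an assumption, and the names `AnnotatedDocument`, `clip_annotation`, and `find_by_annotation` are hypothetical.

```python
import re
from dataclasses import dataclass

MAX_ANNOTATION_CHARS = 600  # annotation length limit mentioned in the text


@dataclass
class AnnotatedDocument:
    title: str
    annotation: str


def clip_annotation(text: str, limit: int = MAX_ANNOTATION_CHARS) -> str:
    """Shorten an annotation to at most `limit` characters on a word boundary."""
    text = " ".join(text.split())  # collapse runs of whitespace
    if len(text) <= limit:
        return text
    head = text[:limit]
    if text[limit] != " ":
        # The cut landed inside a word, so drop the partial word.
        head = head.rsplit(" ", 1)[0]
    return head.rstrip()


def tokenize(text: str) -> set[str]:
    """Lowercase the text and split it into a set of words."""
    return set(re.findall(r"\w+", text.lower()))


def find_by_annotation(query: str,
                       documents: list[AnnotatedDocument]) -> list[AnnotatedDocument]:
    """Return documents whose annotation contains every word of the query."""
    terms = tokenize(query)
    if not terms:
        return []
    return [doc for doc in documents if terms <= tokenize(doc.annotation)]
```

Matching against short annotations instead of full document bodies keeps the amount of text compared per query small, which is one plausible reason the approach speeds up search.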

Classification Module

Another module is responsible for document classification. It uses a neural network based on the BERT model, which handles formalized queries effectively and navigates narrow semantic domains well. We trained it on 1500 corporate documents.

The neural network quickly learned to identify the document type with 89% accuracy. It can easily distinguish company policies from resignation letters, vacation requests, and other documents. At the same time, the neural network can be trained to classify other categories of text with ease. For example, it can sort scholarly articles by topics like history, philosophy, politics, sociology, and other sciences.
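An accuracy figure such as the one above is the share of documents whose predicted type matches the expected type. The sketch below shows how overall and per-type accuracy can be computed; it is an assumption about the evaluation procedure, and the function names are hypothetical.

```python
from collections import Counter


def accuracy(expected: list[str], predicted: list[str]) -> float:
    """Share of documents whose predicted type equals the expected type."""
    if not expected or len(expected) != len(predicted):
        raise ValueError("label lists must be non-empty and of equal length")
    correct = sum(e == p for e, p in zip(expected, predicted))
    return correct / len(expected)


def per_type_accuracy(expected: list[str], predicted: list[str]) -> dict[str, float]:
    """Accuracy computed separately for each expected document type."""
    totals = Counter(expected)
    hits = Counter(e for e, p in zip(expected, predicted) if e == p)
    return {label: hits[label] / totals[label] for label in totals}
```

Looking at per-type figures alongside the overall number helps reveal whether one document type is confused with another more often than the rest.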

We built the classification module with Python 3.10, FastAPI, PyTorch, and Transformers.

Indexing and Search Module

We chose Elasticsearch as our search engine and made minor modifications to suit our needs. For example, it now supports fragment search in addition to full-text search and annotates and classifies documents during the indexing process. We developed the indexing and search module using Python 3.10, FastAPI, PostgreSQL, and FS Crawler.

Additionally, we developed two utilities that extend Elasticsearch: one for controlling index versions and one for troubleshooting data migration errors. Another advantage of the solution is that search stays available to users around the clock: while data is being indexed or reindexed, a backup index is activated.
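One common way to keep search available during reindexing in Elasticsearch is to query through an index alias and repoint it once the new index is ready. The text does not say whether the platform's utilities work this way, so treat the sketch below as an assumption. It only builds the request body for the Elasticsearch `POST /_aliases` endpoint; the alias and index names are hypothetical.

```python
import json


def alias_swap_actions(alias: str, old_index: str, new_index: str) -> dict:
    """Build the body for POST /_aliases that repoints `alias` in one request.

    Elasticsearch applies all actions of a single request atomically,
    so searches through the alias never see a moment without an index.
    """
    return {
        "actions": [
            {"remove": {"index": old_index, "alias": alias}},
            {"add": {"index": new_index, "alias": alias}},
        ]
    }


# Hypothetical names: users search "documents" while "documents_v2" is built.
body = alias_swap_actions("documents", "documents_v1", "documents_v2")
request_payload = json.dumps(body)  # JSON to send as the request body
```

With this pattern the previous index can also be kept for a while as a fallback, which pairs naturally with index versioning.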

We achieved high-speed indexing, annotation, and classification of documents. The table below shows the performance of the platform with different types of documents. The measurements were taken on 1000 documents with an average file size of 460 KB.

| Document Type | Indexing (min.) | Classification (min.) | Indexing + Annotation (min.) | Indexing + Classification + Annotation (min.) |
| --- | --- | --- | --- | --- |
| TXT format | 16 | 37 | 52 | 74 |
| PDF format | 40 | 54 | 72 | 72 |
| DOCX format | 16 | 22 | 40 | 49 |
| Mixed formats | 25 | 45 | 59 | 79 |
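To put the table in perspective, the total times can be converted into throughput. The short calculation below uses only the full-pipeline column (indexing, classification, and annotation) and the stated 1000 documents; the variable and function names are illustrative.

```python
DOCUMENTS = 1000  # number of documents stated in the text

# Indexing + classification + annotation times from the table, in minutes.
FULL_PIPELINE_MINUTES = {"TXT": 74, "PDF": 72, "DOCX": 49, "Mixed": 79}


def docs_per_minute(minutes: float) -> float:
    """Average number of documents processed per minute."""
    return DOCUMENTS / minutes


def seconds_per_document(minutes: float) -> float:
    """Average processing time for one document, in seconds."""
    return minutes * 60 / DOCUMENTS


for doc_type, minutes in FULL_PIPELINE_MINUTES.items():
    print(f"{doc_type}: {docs_per_minute(minutes):.1f} docs/min, "
          f"{seconds_per_document(minutes):.2f} s per document")
```

For the mixed-format set, for instance, 79 minutes for 1000 documents works out to roughly 4.7 seconds per document for the full pipeline.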

User Interface

Our developers wrote the front-end component using the Vue.js framework. The administrator can use the interface to connect any third-party document repository to the platform, including Google Drive, Dropbox, Bitrix, and others. The administrator also specifies what to do with the information in the repository: simply index it, or also annotate and classify the text as part of the indexing process.

Users now work through a single web interface with one search window for all document repositories connected to the platform. They no longer need to navigate different search services or switch between external repositories. Each search runs across all repositories at once.

Users can easily customize the design of the web interface to any corporate style, including colors, images, and text labels. Once the design is customized, the interface can easily integrate with any enterprise content management system.

The Result

The platform development was divided into two phases and took one year. A team of 14 specialists worked on the system, including backend and frontend developers, QA specialists, DevOps engineers, ML engineers, and ML analysts. As a result, we created a user-friendly and effective tool that will significantly simplify the process of finding documents and necessary information in corporate document repositories.

Advantages of the Platform

- Accurate search based on the meaning and content of the text, not just the file name.

- Single interface for searching documents across all repositories.

- Quick integration with existing corporate infrastructure.

- Convenient system of filters by document type and subject.

We achieved high-quality data analysis with minimal prior training. The neural network models need about 1500 documents and one and a half to two hours of training, after which they reach classification and annotation accuracy in the 80-89% range.

Target Audience

The platform targets the commercial sector (B2B), where telecom companies, banks, and large retailers are interested in such solutions. The public sector (B2G) is also interested in these systems, especially ministries, authorities, and budgetary institutions that handle vast amounts of information every day and need flexible, convenient tools for searching documents across all repositories.